A Correspondence Between

the Denotational Semantics of Programming Languages

and the Process of Code Generation

the Denotational Semantics of Programming Languages

and the Process of Code Generation

Dr. Martín Raskovsky - 1982

The formal aspects of the syntax and semantics of programming languages, together with their underlying theories, have proved to be very useful for systematically writing, or mechanically generating, implementations for these languages.

For example, the style of description of ALGOL6O suggested to E. Irons [Iro61], that the formal syntactic description of the language could be used to control the compiler for the language directly. Shortly after, the term 'compiler-compiler' was already being used by R. Brooker [Bro62] [Bro63], however, [Bro60] describes an 'autocode to write autocodes'.

The micro-syntax of a language - how words are formed - is in general specified by a regular grammar (RG), whose associated implementation system is a finite state machine (FSM). The theory of regular languages and finite automata was developed in the early 1950's and is therefore one of the oldest branches of theoretical computing science. On the other hand, the syntax of a language - how words are put together into sentences - is in general specified by a context free grammar (CFG), whose associated implementation system is a push down automaton (PDA). The early 1960's witnessed a tremendous growth in language theory, the Chomsky hierarchy has been extensively studied by many people. Finally, the semantics of a language - what a sentence means - is for our purposes specified by abstract mathematical entities, a system of functions whose associated implementation system is the λ-calculus (LAM). This method, Denotational Semantics (DS), originated in the late I960's. C. Strachey in his early paper 'Towards a formal Semantics' [Str66], showed that with the introduction of a few new basic concepts it was possible to describe not only the applicative, but also the imperative parts of a programming language in terms of applicative expressions [Lan65]. The result of Strachey's joint work with D. Scott, which started in 1969 [Sco70], was the construction of λ-calculus models and reflexive domains: The main point is the treatment of functions as the representation of the meaning of programs, rather than the syntactic or operational representation used thus far.

Analogy

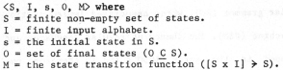

The three implementation systems: FSM, PDA and LAM, can be regarded as input programs to a compiler generator system whose output can be interpreted by a direct simulation of each underlying machine. For example, from the definition of a particular FSM:

one can implement a lexical analyser by a simulation of M (also by adding a set of actions, an error state and a set of declarations global to every action). This approach to automatic generation of lexical analysers is extensively described in [Lew79].

one can implement a lexical analyser by a simulation of M (also by adding a set of actions, an error state and a set of declarations global to every action). This approach to automatic generation of lexical analysers is extensively described in [Lew79].

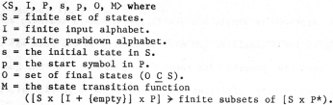

From the definition of a PDA:

one can implement a parser, by a simulation of P and M, (also adding actions, error state, declarations, attributes values and attributed

pushdown symbols). Syntax directed translation has been known for quite a long time, [Iro61], [Rnu68], [Lew68], [AaU69], [AaU72] and [Lew79] are just

a few references to the subject.

one can implement a parser, by a simulation of P and M, (also adding actions, error state, declarations, attributes values and attributed

pushdown symbols). Syntax directed translation has been known for quite a long time, [Iro61], [Rnu68], [Lew68], [AaU69], [AaU72] and [Lew79] are just

a few references to the subject.

From the DS definition of the semantics of a programming language in LAM:

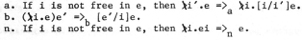

can directly implement the conversions of the λ-calculus:

can directly implement the conversions of the λ-calculus:

Pioneer work in the area of compiler generation from denotational semantics, was carried out by P. Mosses [Mos75] [Mos76] [Mos78], whose system known as SIS (Semantic Implementation System), uniformly translates DS equations into LAM, and then runs an interpreter over this 'code'. Also, later work by [Jon80], [Gan80], [Ras80], [Pau81], [Hen82] and [Set82] has shown that semantic directed compiler/interpreter generation out of DS is a young and promising area of research.

Mosses's approach is simple and general; He treats a semantic specification as a program for a simulated LAM machine exactly in the same way as an RG or CFG is seen as program for respectively an FSM or a PDA. The result is, however, not always efficient and practical. In particular, the lack of efficiency of SIS reminds one of an analogy between the systems devised to efficiently implement an FSM or a PDA; they are not necessarily a simulation of the underlying automaton. In the same way, one can think of an implementation of LAM which does not necessarily call for a lambda interpreter. The particular problem with a semantic specification is that it refers to a run time activity, as opposed to a syntactic specification which refers to a recognise and parse activity. At run time efficiency matters become crucial.

An alternative approach for achieving an efficient and practical implementation is to use the formal specification to 'derive' or 'generate' a 'program', written in a systems programming language. From an RG one can generate a scanner, from a CFG a parser and from a DS a code generator. These three programs perform each a function which can be expressed as:

scanner:[CHA -> SYM]

parser:[SYM -> TRE]

translator:[TRE -> COD]

where

CHA = the representation of the source program as a character string.

SYM = the internal representation as a sequence of symbols.

TRE = the internal representation in the form of a tree.

COD = the target code.

In our research, the missing function checker:[TRE -> TRE] (to type check and solve the context sensitive aspects of programming language) is embedded in the code generation phase.

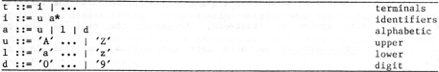

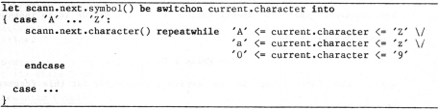

Consider as an example, the RG specification of IDENTIFIERS as an instance of a lexical specification:

The first step in a derivation of a scanner is to generate a recogniser:

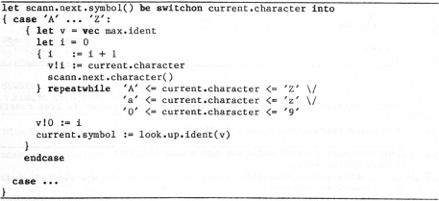

Next, one can inject simple implementation techniques to transform it into a scanner:

Note that this scanner, derived from the original RG specification, makes reference to a symbol structure via the call of the function look.up.ident whose specification was not given and is left open for an implementer to design according to his own implementation choice. Also the interface to an operating system or front-end, throughout the call of the procedure scann.next.character is also left unspecified, so that in a different environment, or different hardware configuration, an implementer can choose the appropriate implementation.

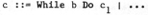

Now consider the CFG specification of a WHILE-LOOP as an instance of a command definition:

Again, the first step is to derive a recogniser for this fragment, for example:

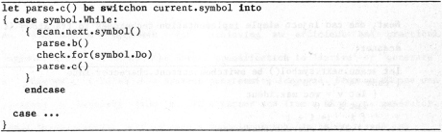

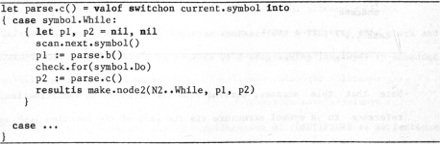

Next, this recogniser can easily be made into a parser (a tree constructor) by adding appropriate action routines:

In this parser, one must note again, how the structure of the internal representation of the syntactic structure of a program is not specified. Again this leaves crucial implementation details open for an implementer to decide. So in this respect, we are arguing for a generation of structured and efficient compilers.

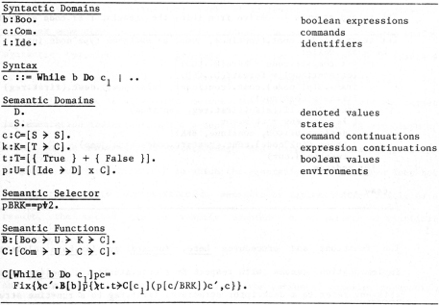

Finally, consider the semantic specification for the same fragment:

This definition is far more complex in its structure and information content than the syntactic one above. Though at first one may have the impression that the relationship between this semantic specification and a procedure to generate code for the same fragment is hopelessly unrecognisable, in fact, there are many systematic relationships between both. This relationship is important to anyone who wishes to study semantic or implementation structures.

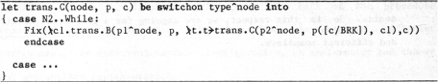

As an example of the correspondence that we are proposing here is the first step; The derivation of the skeleton of a translator:

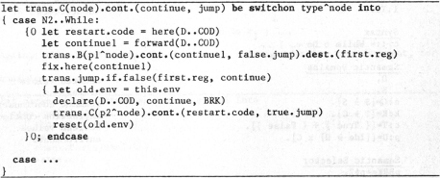

The second step is to derive from this, the translator or code generator:

The functions and procedures here, forward and fix.here relate to open implementation issues with respect to a code structure; declare, reset and this.env refer to a descriptor structure; first.reg to a run-time structure; trans.jump.if.false to a code generator interface; trans.B is a procedure which is expected to be generated accordingly to the DS specification for boolean expressions; And finally node, type, p1 and p2 refer to a tree structure.

Our contribution

The generation of an efficient and practical program out of the formal specification of the syntax (or lexical) issues of a programming language is a solved problem. However, the generation of an efficient translator out of a semantic specification in the form of a denotational semantics is not. This thesis: A Correspondence Between the Denotational Semantics of Programming Languages and the Process of Code Generation, has grown out of experience in the engineering task of implementing a semantics directed compiler generator. As will be quite evident, the method, as with the analog syntactic problem, is to generate a program, written in a systems programming language, by transforming the semantic specification. The treatment of the relationships, between the source semantics and the target implementation indicates clearly a dependency upon the form and structure of both source mathematical metalanguage and target systems programming language. However, the manner in which the generation is formulated does not adhere rigidly to any particular semantic or implementation model. As a result, the method can be readily adapted to a variety of practical situations.An essential feature of this thesis is the series of compiler generation examples presented, in general taken from J. Stoy's "Denotational Semantics: The Scott-Strachey Approach To Programming Language Theory" [Sto77]. This is designed to make the format more approachable to anybody familiar with this excellent account of denotational semantics.

This thesis will show how one can systematically derive, or automatically generate, the code generation phase of compilers for languages like those given as examples of semantic definitions in [Sto77] or as complex as GEDANKEN [Rey70j.

The novel aspect of our contribution is our method of analysing a denotational specification. From a purely mathematical point of view the way that functions in some abstract space are described is irrelevant. But if one is to process this specification automatically, then the particular representation of these functions is important. We call this representation the concrete semantics. Concrete semantics can be interpreted algorithmically. This is what P. Mosses did by a direct interpretation of LAM. Our approach is characterised by the observation that under an appropriate algorithmic interpretation, functions and domains of a concrete semantics, can be regarded as procedures and data-types. Such interpretation amounts to establishing a correspondence between functions and procedures and therefore also between domains and data-types,

The structure and operation of the automatically generated (or systematically derived) code generator, which is written in the systems programming language BCPL, is in effect very similar to the one we might have produced by hand. The only hand coding is the interface for the particular target machine, required for both the primitive functions of the original specification and those introduced by the generation. For the latter, our system provides a library of routines to generate code for the DEC-10 system, so that in all the examples we tried, we only had to hand code the former. The parser is separately generated with an LL1 system [Suf78a] which generates procedures in the same systems programming language.

Our research is not directly concerned with the problem of correctness. We are mainly interested in the correspondence between a denotational specification and the process of code generation. We are also interested in showing that this correspondence can be used to produce a compiler-compiler, and that the code generators obtained by such a system produce efficient code.

In the following sequence of operations:

- Given a transformational system T and a denotational specification S of a programming language L.

- Using T, generate from S a code generator G for L.

- Using G, compile a program P (in L) producing code C for the DEC-10.

- Running C over some input I obtaining an output 0.

However we do appreciate the need for correctness proofs. So among the lines given for future research, we indicate how we might proceed to prove the correctness of the automatically generated (or systematically derived) code generators.

The Form of this Thesis

In Chapter 2 we introduce a mechanism of transformation, a production rule system to transform semantic equations into procedures of the programming language BCPL [Ric79]. Chapters 3 to 5 each analyses a different programming language example (from [Sto77]) with increasing levels of difficulty. The main features are respectively: state, environment and continuations. Chapter 6 looks at the Lambda Calculus, defined using both direct and continuation semantics, both with call by value and name. Chapter 7 describes a different approach: instead of starting with a standard denotational semantics, we study the possibility of abstracting implementation ideas at a denotational level, thus starting with an implementation denotational semantics. The objective of this exercise is the generation of more efficient code generators and also to provide the grounds for correctness proofs, as we have shown in [Ras80]. Finally, Chapter 8 looks at some future directions of our research.

Appendix A briefly describes the implementation of a system called ISL (Implementation Semantic Language), which has automatically generated all the examples shown in this thesis ([Ras79] [Ras80] [Ras81] [Ras82]). In Appendix B, we have collected together all transformation rules. Appendix C defines the operators used in the source and target metalanguages. Appendices D and E show the two main examples, Stoy's example language and GEDANKEN.

Text of the thesis

This concludes our online introduction. The entire thesis text can be

viewed as pdf or downloaded as zip/pdf

Please leave your comments in my guestbook